Bifrost is a high-performance AI gateway that unifies access to 15+ providers (OpenAI, Anthropic, AWS Bedrock, Google Vertex, and more) through a single OpenAI-compatible API. Deploy in seconds with zero configuration and get automatic failover, load balancing, semantic caching, and enterprise-grade features.

Go from zero to production-ready AI gateway in under a minute.

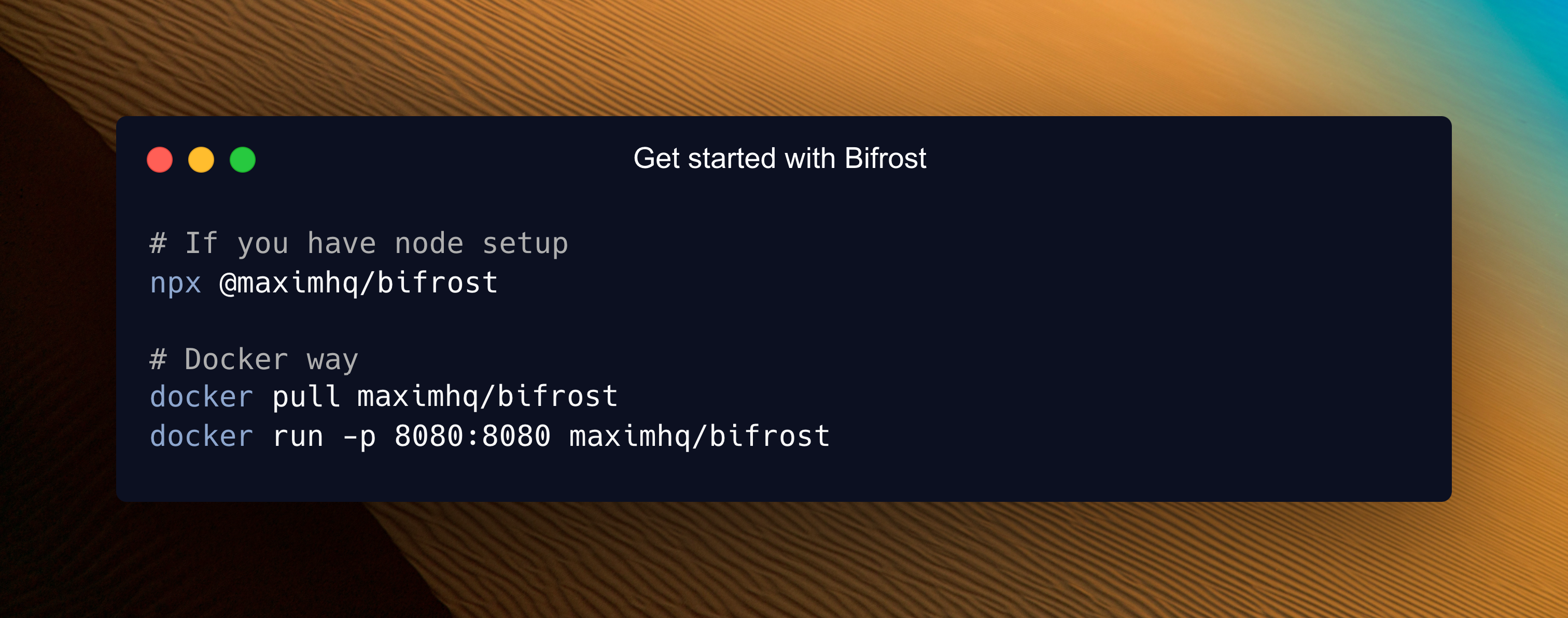

Step 1: Start Bifrost Gateway

# Install and run locally

npx -y @maximhq/bifrost

# Or use Docker

docker run -p 8080:8080 maximhq/bifrostStep 2: Configure via Web UI

# Open the built-in web interface

open http://localhost:8080Step 3: Make your first API call

curl -X POST http://localhost:8080/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"model": "openai/gpt-4o-mini",

"messages": [{"role": "user", "content": "Hello, Bifrost!"}]

}'That's it! Your AI gateway is running with a web interface for visual configuration, real-time monitoring, and analytics.

Complete Setup Guides:

- Gateway Setup - HTTP API deployment

- Go SDK Setup - Direct integration

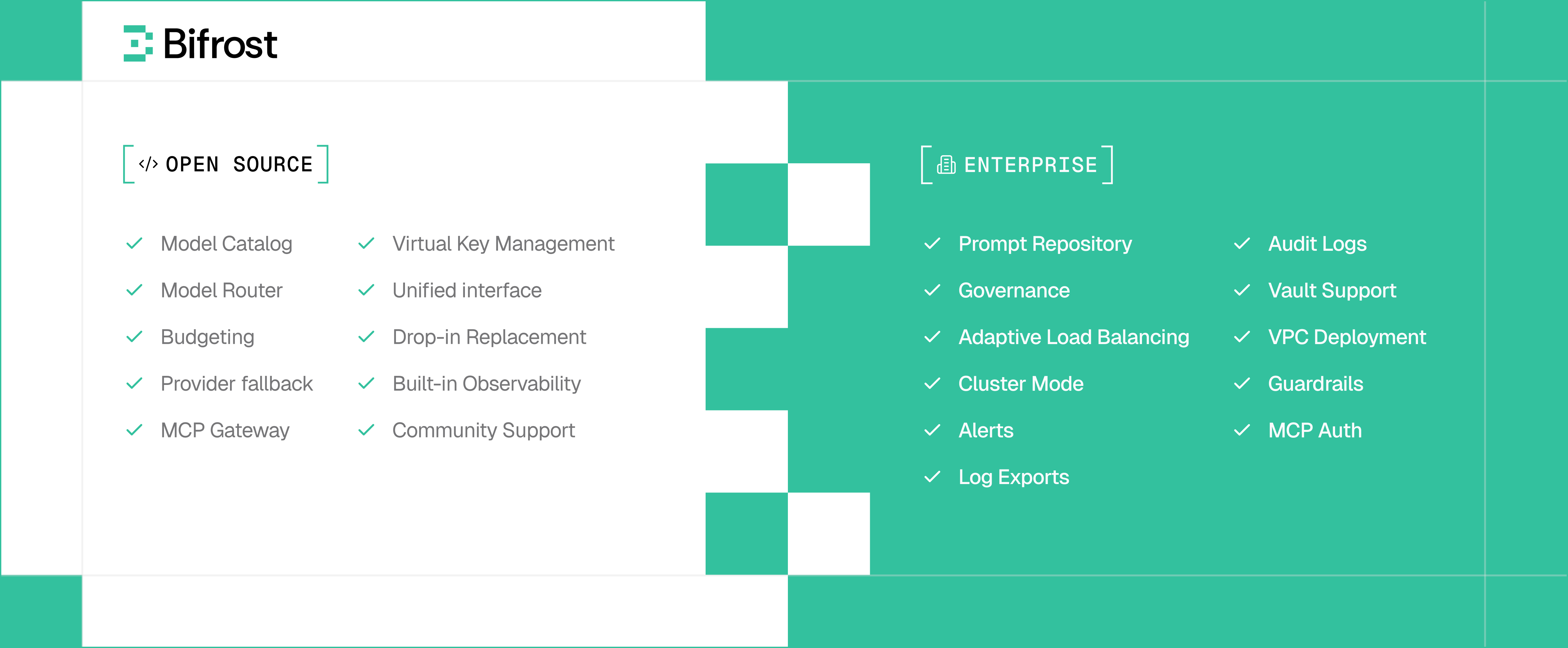

Bifrost supports enterprise-grade, private deployments for teams running production AI systems at scale. In addition to private networking, custom security controls, and governance, enterprise deployments unlock advanced capabilities including adaptive load balancing, clustering, guardrails, MCP gateway and and other features designed for enterprise-grade scale and reliability.

- Unified Interface - Single OpenAI-compatible API for all providers

- Multi-Provider Support - OpenAI, Anthropic, AWS Bedrock, Google Vertex, Azure, Cerebras, Cohere, Mistral, Ollama, Groq, and more

- Automatic Fallbacks - Seamless failover between providers and models with zero downtime

- Load Balancing - Intelligent request distribution across multiple API keys and providers

- Model Context Protocol (MCP) - Enable AI models to use external tools (filesystem, web search, databases)

- Semantic Caching - Intelligent response caching based on semantic similarity to reduce costs and latency

- Multimodal Support - Support for text,images, audio, and streaming, all behind a common interface.

- Custom Plugins - Extensible middleware architecture for analytics, monitoring, and custom logic

- Governance - Usage tracking, rate limiting, and fine-grained access control

- Budget Management - Hierarchical cost control with virtual keys, teams, and customer budgets

- SSO Integration - Google and GitHub authentication support

- Observability - Native Prometheus metrics, distributed tracing, and comprehensive logging

- Vault Support - Secure API key management with HashiCorp Vault integration

- Zero-Config Startup - Start immediately with dynamic provider configuration

- Drop-in Replacement - Replace OpenAI/Anthropic/GenAI APIs with one line of code

- SDK Integrations - Native support for popular AI SDKs with zero code changes

- Configuration Flexibility - Web UI, API-driven, or file-based configuration options

Bifrost uses a modular architecture for maximum flexibility:

bifrost/

├── npx/ # NPX script for easy installation

├── core/ # Core functionality and shared components

│ ├── providers/ # Provider-specific implementations (OpenAI, Anthropic, etc.)

│ ├── schemas/ # Interfaces and structs used throughout Bifrost

│ └── bifrost.go # Main Bifrost implementation

├── framework/ # Framework components for data persistence

│ ├── configstore/ # Configuration storages

│ ├── logstore/ # Request logging storages

│ └── vectorstore/ # Vector storages

├── transports/ # HTTP gateway and other interface layers

│ └── bifrost-http/ # HTTP transport implementation

├── ui/ # Web interface for HTTP gateway

├── plugins/ # Extensible plugin system

│ ├── governance/ # Budget management and access control

│ ├── jsonparser/ # JSON parsing and manipulation utilities

│ ├── logging/ # Request logging and analytics

│ ├── maxim/ # Maxim's observability integration

│ ├── mocker/ # Mock responses for testing and development

│ ├── semanticcache/ # Intelligent response caching

│ └── telemetry/ # Monitoring and observability

├── docs/ # Documentation and guides

└── tests/ # Comprehensive test suites

Choose the deployment method that fits your needs:

Best for: Language-agnostic integration, microservices, and production deployments

# NPX - Get started in 30 seconds

npx -y @maximhq/bifrost

# Docker - Production ready

docker run -p 8080:8080 -v $(pwd)/data:/app/data maximhq/bifrostFeatures: Web UI, real-time monitoring, multi-provider management, zero-config startup

Learn More: Gateway Setup Guide

Best for: Direct Go integration with maximum performance and control

go get github.com/maximhq/bifrost/coreFeatures: Native Go APIs, embedded deployment, custom middleware integration

Learn More: Go SDK Guide

Best for: Migrating existing applications with zero code changes

# OpenAI SDK

- base_url = "https://api.openai.com"

+ base_url = "http://localhost:8080/openai"

# Anthropic SDK

- base_url = "https://api.anthropic.com"

+ base_url = "http://localhost:8080/anthropic"

# Google GenAI SDK

- api_endpoint = "https://generativelanguage.googleapis.com"

+ api_endpoint = "http://localhost:8080/genai"Learn More: Integration Guides

Bifrost adds virtually zero overhead to your AI requests. In sustained 5,000 RPS benchmarks, the gateway added only 11 µs of overhead per request.

| Metric | t3.medium | t3.xlarge | Improvement |

|---|---|---|---|

| Added latency (Bifrost overhead) | 59 µs | 11 µs | -81% |

| Success rate @ 5k RPS | 100% | 100% | No failed requests |

| Avg. queue wait time | 47 µs | 1.67 µs | -96% |

| Avg. request latency (incl. provider) | 2.12 s | 1.61 s | -24% |

Key Performance Highlights:

- Perfect Success Rate - 100% request success rate even at 5k RPS

- Minimal Overhead - Less than 15 µs additional latency per request

- Efficient Queuing - Sub-microsecond average wait times

- Fast Key Selection - ~10 ns to pick weighted API keys

Complete Benchmarks: Performance Analysis

Complete Documentation: https://docs.getbifrost.ai

- Gateway Setup - HTTP API deployment in 30 seconds

- Go SDK Setup - Direct Go integration

- Provider Configuration - Multi-provider setup

- Multi-Provider Support - Single API for all providers

- MCP Integration - External tool calling

- Semantic Caching - Intelligent response caching

- Fallbacks & Load Balancing - Reliability features

- Budget Management - Cost control and governance

- OpenAI SDK - Drop-in OpenAI replacement

- Anthropic SDK - Drop-in Anthropic replacement

- AWS Bedrock SDK - AWS Bedrock integration

- Google GenAI SDK - Drop-in GenAI replacement

- LiteLLM SDK - LiteLLM integration

- Langchain SDK - Langchain integration

- Custom Plugins - Extend functionality

- Clustering - Multi-node deployment

- Vault Support - Secure key management

- Production Deployment - Scaling and monitoring

Join our Discord for community support and discussions.

Get help with:

- Quick setup assistance and troubleshooting

- Best practices and configuration tips

- Community discussions and support

- Real-time help with integrations

We welcome contributions of all kinds! See our Contributing Guide for:

- Setting up the development environment

- Code conventions and best practices

- How to submit pull requests

- Building and testing locally

For development requirements and build instructions, see our Development Setup Guide.

This project is licensed under the Apache 2.0 License - see the LICENSE file for details.

Built with ❤️ by Maxim